Complex Analysis

Fall Semester 2018-19

Department of Mathematics and Applied Mathematics

University of Crete

Teacher: Mihalis Kolountzakis

▶ Announcements

If you would like to see your exam paper, you can do so on Monday 23 Sep. 2019, 9-10, in my office Γ 213.

20-9-2018: The course will be taught in English, since there will be 1-3 students from the Erasmus programme in the audience. It will be taught in such a way that anyone with an elementary knowledge of English will have no trouble following it. The main textbook (Bak & Newman) is also available in English, and all problem sets and exams will be in both languages.

▶ Time Schedule

Room Α 208. Monday 11-1, Friday 9-11.

Teacher office hours: Monday 9-11, Γ213.

▶ Course description

Goals: Introduction to the basic techniques of Complex Analysis, primarily from a computational standpoint.

Content: Topology of the complex plane. Analytic functions, contour integrals and power series. Cauchy theory and applications.

▶ Books and lecture notes

▶ Student evaluation

▶ Class diary

We covered, more or less, § 1.1 and 1.2 of [BN]. We talked about the properties of complex numbers, how we add and multiply them, their conjugate, their polar form and how the polar form interacts with multiplication. The property \[ \cis(\theta_1) \cdot \cis(\theta_2) = \cis(\theta_1+\theta_2) \] is particularly important (here \( \cis\theta \) is shorthand for \( \cos\theta+i\sin\theta \)). We showed how to compute the roots of the general quadratic equation \[ a z^2 + b z + c = 0, \] how to find the square roots of a given complex number, and how to find the three cube roots of 1 (that is, all solutions to the equation \(z^3=1\)).
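
As a quick numerical illustration of the computations above, the following Python sketch checks the product rule for \( \cis \) at two sample angles and solves one quadratic with complex coefficients. The helper names `cis` and `quadratic_roots`, the sample angles and the sample equation \( z^2 + (1-i)z - i = 0 \) are chosen here for illustration and are not taken from [BN].

```python
import cmath
import math

def cis(theta):
    """Return cos(theta) + i sin(theta)."""
    return complex(math.cos(theta), math.sin(theta))

def quadratic_roots(a, b, c):
    """Return both roots of a z^2 + b z + c = 0 for complex a, b, c with a != 0."""
    d = cmath.sqrt(b * b - 4 * a * c)  # one square root of the discriminant; the other is -d
    return (-b + d) / (2 * a), (-b - d) / (2 * a)

# Product rule: cis(t1) * cis(t2) and cis(t1 + t2) agree up to rounding.
t1, t2 = 0.7, 2.1
product_gap = abs(cis(t1) * cis(t2) - cis(t1 + t2))

# Sample equation z^2 + (1 - i) z - i = 0, which factors as (z - i)(z + 1).
roots = quadratic_roots(1, 1 - 1j, -1j)
print(product_gap, roots)
```

The same formula as in the real case works because every complex number has a square root, so the discriminant never causes trouble.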

Problems:

To prepare for Friday please do problems 1-3 from [BN, Chapter 1].

We spoke about how to interpret geometrically (as parts of the complex plane) several algebraic descriptions of sets of complex numbers (such as \(\Set{z:\ \Abs{z-1-i}\lt 2}\) or \(\Set{z: \Re{z}\gt 0}\)).

We saw why the fact that a number cannot have more than \(n\) roots of order \(n\) is a consequence of polynomial division: If \(A(z), B(z)\) are two polynomials with complex coefficients then there are unique polynomials \(Q(z)\) (the quotient) and \(R(z)\) (the remainder) such that \[ A(z) = Q(z) B(z) + R(z),\ \ \text{ and } \deg R(z) \lt \deg B(z). \] Applying this to the polynomial of degree one \[ B(z) = z - z_0 \] we obtain that if \(A(z_0)=0\) (we say that \(z_0\) is a root of \(A(z)\)) then \[ A(z) = (z-z_0) Q(z),\ \ \ \text{ for some polynomial } Q(z), \] and it is easy to see that \(\deg Q(z) = \deg A(z)-1\). Repeating this proves that any polynomial of degree \(n\) can have \(\le n\) roots (we will see later that it always has \(n\) complex roots, counted with multiplicity) which, in turn, implies that a complex number can only have \(\le n\) roots of order \(n\). Of course, we saw, using the polar form of complex numbers, that there are exactly \(n\) such roots and we described them completely.

The \(n\)-th roots of the non-zero complex number \(z = r\cdot \cis\theta = r(\cos\theta + i \sin\theta)\) are the \(n\) numbers \[ r^{1/n} \cis\left(\frac{\theta}{n}+k\frac{2\pi}{n}\right),\ \ \ \text{ where } k=0, 1, 2, \ldots, n-1. \] These numbers form a regular polygon of \(n\) sides inscribed in the circle \(\Set{\zeta:\ \Abs{\zeta}=r^{1/n}}\).
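
The formula for the \(n\)-th roots translates directly into a few lines of Python. This is only a sketch: the function name `nth_roots` and the two sample inputs are illustrative choices, and `cmath.polar` returns the principal argument \( \theta \in (-\pi, \pi] \), which is one of the admissible choices of \( \theta \).

```python
import cmath
import math

def nth_roots(z, n):
    """Return the n distinct n-th roots of a non-zero complex number z."""
    r, theta = cmath.polar(z)  # z = r * cis(theta) with r > 0
    return [cmath.rect(r ** (1.0 / n), theta / n + 2 * math.pi * k / n)
            for k in range(n)]

cube_roots_of_one = nth_roots(1, 3)   # the three solutions of z^3 = 1
fourth_roots = nth_roots(-16, 4)      # vertices of a square on the circle |z| = 2
print(cube_roots_of_one)
print(fourth_roots)
```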

Next, we started talking about the topology of \(\CC\) as a metric space. We recalled the definition of a Cauchy sequence in a metric space and proved that \(\CC\) is a complete metric space (here we assumed the fact that \(\RR\) is a complete metric space). We spoke about open and closed sets in \(\CC\) and saw several examples.

Problems:

Please do problems 4, 5, 10, 11, 12, 13 from [BN, Chapter 1].

We continued our discussion of convergence and continuity for complex numbers and functions of complex numbers. We also discussed the concept of connectivity and polygonal connectivity [BN, § 1.3]. These two concepts are identical for open sets \(D \subseteq \CC\) and such sets we call regions from now on (i.e. a region is an open connected set).

Next we talked about the convergence of series of complex numbers \[ \sum_{n=0}^\infty z_n \] and our emphasis was on power series, centered at 0 \[ \sum_n a_n z^n \] or centered at \(a \in \CC\) \[ \sum_n a_n (z-a)^n. \] This discussion is essentially a repetition of what we have learned about power series in our (real) analysis courses, the only difference being that instead of an interval of convergence centered at \(a\) we now have a disk of convergence again centered at \(a\). You can read the relevant material in [BN, § 2.2]. The main theorem about power series is Theorem 2.8 in there. Please also read carefully the example applications that follow that theorem. You can read about absolute convergence in [BN, § 1.3].

Problems:

Please do problems 7, 8, 9, 12, 13 from [BN, Chapter 2].

Today we defined what it means for a function \(f:\CC\to\CC\) to be differentiable at a point \(z \in \CC\). The definition is completely analogous to the one for real functions: \(f\) is differentiable at \(z\) if the limit \[\frac{f(z+h)-f(z)}{h}\] exists as \(h \to 0\). If the limit exists we call it the derivative of \(f\) at \(z\) and denote it by \(f'(z)\). Here \(h\) is a complex quantity so it can tend to 0 in many different ways, and that is why this notion of differentiability is, in a sense, rare. For instance the functions \(f(z) = \overline{z}\) and \(g(z) = \Abs{z}^2\) are NOT differentiable (but, for instance, all polynomials of \(z\) are differentiable).

We then showed that for a function \[ f(z) = f(x+iy) = u(x+iy) + i v(x+iy) \] to be differentiable at \(z = x+iy\) a necessary condition is that the so-called Cauchy-Riemann equations \[ u_x = v_y,\ \ u_y = -v_x \] are satisfied (here \(u = \Re{f}, v = \Im{f}\) and \(\cdot_x, \cdot_y\) denote partial differentiation).
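
A small numerical experiment can make the Cauchy-Riemann equations, written as \(u_x = v_y\) and \(u_y = -v_x\), more concrete. The sketch below estimates the partial derivatives by central differences and reports the two residuals \(u_x - v_y\) and \(u_y + v_x\). The function name `cr_residuals`, the step size and the sample point are assumptions made for this illustration. A numerical check of this kind can only suggest, not prove, differentiability.

```python
def cr_residuals(f, z, h=1e-6):
    """Central-difference estimates of (u_x - v_y, u_y + v_x) at the point z."""
    fx = (f(z + h) - f(z - h)) / (2 * h)            # derivative in the x direction
    fy = (f(z + 1j * h) - f(z - 1j * h)) / (2 * h)  # derivative in the y direction
    u_x, v_x = fx.real, fx.imag
    u_y, v_y = fy.real, fy.imag
    return u_x - v_y, u_y + v_x

z0 = 1 + 2j
square_res = cr_residuals(lambda z: z * z, z0)              # both residuals close to 0
conjugate_res = cr_residuals(lambda z: z.conjugate(), z0)   # first residual close to 2
print(square_res, conjugate_res)
```

For \(f(z) = \overline{z}\) we have \(u = x\) and \(v = -y\), so \(u_x - v_y = 2\) at every point, which is consistent with \(\overline{z}\) not being differentiable anywhere.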

We then proved that if \(f(z)\) is given by a power series \[ f(z) = \sum_{n=0}^\infty a_n (z-a)^n \] in its circle of convergence \(\Set{z:\ \Abs{z-a} \lt R}\) then \(f\) is differentiable in that disk and the derivative is also given by a power series with the same radius of convergence \[ f'(z) = \sum_{n=1}^\infty n a_n(z-a)^{n-1}.\] Iterating this theorem we conclude that any power series is infinitely differentiable in its circle of convergence. We also derived the following formula that connects the coefficients of the power series with the values of its derivatives at the center: \[ a_n = \frac{f^{(n)}(a)}{n!},\ \ \ n=0, 1, 2, \ldots. \]

Read [BN, Chapter 2] and parts of [BN, § 3.1]. We did not say anything about § 2.1 but you can read it yourselves.

Problems:

Please do problems 1-3 from [BN, Chapter 3].

We first proved a uniqueness theorem [BN, Theorem 2.12] for power series: if $z_k$ is a sequence of complex numbers, all different from $a$, which converges to $a \in \CC$ and the power series $$ \sum_{n=0}^\infty a_n (z-a)^n $$ is equal to $0$ at each $z_k$ then $a_n = 0$ for all $n=0, 1, 2, \ldots$. A consequence of this is that if two power series are identical (as functions) on a disk around their common center then they have the same coefficients (hence the name uniqueness).

We then mentioned, without proof, the theorem that says that the Cauchy-Riemann conditions together with continuity of the partial derivatives guarantee complex differentiability [BN, Proposition 3.2].

Afterwards we mentioned two properties of analytic functions that are unusual and do not hold for functions of a real variable, even if they have infinitely many derivatives [BN, Prop. 3.6 and 3.7]: an analytic function with constant real part or with constant modulus is necessarily constant.

Then we defined the entire functions $e^z, \cos z$ and $\sin z$, which extend, for $z \in \CC$, the well known functions of a real variable $e^x, \cos x$ and $\sin x$. We examined several properties of these functions, and especially their range (which values they take, when $z \in \CC$) and their periodicity (a function $f:\CC\to\CC$ is called periodic with period $T \in \CC\setminus\Set{0}$ if $\forall z:\ f(z+T)=f(z)$). We saw that for each $\theta \in \RR$ we have $$ \cis{\theta} = e^{i\theta}, $$ so, from now on, we will not use the notation $\cis\theta$ anymore but use $e^{i\theta}$ instead [BN, § 3.2].

Problems:

Please do problems 18, 20 from [BN, Chapter 2] and 2, 5, 6, 7 from [BN, Chapter 3].

We derived (using Taylor's theorem) the power series for the function $e^x$, for real $x$, $$ e^x = \sum_{n=0}^\infty \frac{x^n}{n!}, $$ which converges for all real $x$. We then proved that the function $$ f(z) = \sum_{n=0}^\infty \frac{z^n}{n!} $$ (we can prove that this power series converges for all $z \in \CC$), which agrees with $e^x$ for $z \in \RR$, satisfies the functional equation $$ f(z+w) = f(z) f(w). $$ We had proved last time that there is precisely one function $f(z)$ with this property which extends $e^x$ to the complex plane, and we called this function $\exp(z)$ or $e^z$. Therefore we have obtained the power series representation of $e^z$: $$ e^z = \sum_{n=0}^\infty \frac{z^n}{n!} $$ valid for all $z \in \CC$. Using our definition for the functions $\cos{z}$ and $\sin{z}$ $$ \cos{z} = (e^{iz}+e^{-iz})/2,\ \ \ \sin{z} = (e^{iz}-e^{-iz})/(2i), $$ we therefore obtained the power series representation of these functions. We also observed that since $\cos{z}$ is an even function (i.e., $\cos(-z) = \cos{z}$) the implication is that all odd powers of $z$ should be missing from the power series of $\cos{z}$. Similarly all even powers are missing from the power series for $\sin{z}$. (This is a simple consequence of the uniqueness theorem for power series.)
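
The power series for $e^z$ and the functional equation can be checked numerically with partial sums. In the sketch below the function name `exp_partial_sum`, the number of terms and the sample points are arbitrary choices made for illustration. The comparison with `cmath.exp` is only a sanity check, not a proof.

```python
import cmath

def exp_partial_sum(z, terms=40):
    """Sum of z^n / n! for n = 0, ..., terms - 1."""
    total = 0j
    term = 1 + 0j              # current term z^n / n!, starting with n = 0
    for n in range(terms):
        total += term
        term *= z / (n + 1)    # turn z^n / n! into z^(n+1) / (n+1)!
    return total

z, w = 0.3 + 1.0j, -1.2 + 0.5j
series_gap = abs(exp_partial_sum(z) - cmath.exp(z))
functional_gap = abs(exp_partial_sum(z + w) - exp_partial_sum(z) * exp_partial_sum(w))
# cos z = (e^{iz} + e^{-iz}) / 2 computed from the series, compared with cmath.cos
cos_gap = abs((exp_partial_sum(1j * z) + exp_partial_sum(-1j * z)) / 2 - cmath.cos(z))
print(series_gap, functional_gap, cos_gap)
```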

We then moved on to [BN, Chapter 4] and defined contour (or line) integrals of the form $$ \oint_{C} f(z)\,dz, $$ where $C$ is a curve in the complex plane given by a parametrization $z(t)$, where $t$ belongs to some interval $[a, b]$. We did several examples of such integrals. Please read [BN, § 4.1] up to Prop. 4.11.
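
Since a contour integral is, by definition, the ordinary integral $\int_a^b f(z(t))\,z'(t)\,dt$, it can be approximated with any quadrature rule for real integrals. The following Python sketch uses the midpoint rule. The function name `contour_integral` and the sample curves and integrands are chosen here for illustration and are not the examples done in class.

```python
import cmath
import math

def contour_integral(f, z, dz, a, b, steps=2000):
    """Midpoint-rule approximation of the integral of f(z(t)) z'(t) dt over [a, b]."""
    h = (b - a) / steps
    total = 0j
    for k in range(steps):
        t = a + (k + 0.5) * h
        total += f(z(t)) * dz(t)
    return total * h

def circle(t):
    return cmath.exp(1j * t)        # unit circle, counterclockwise

def circle_dt(t):
    return 1j * cmath.exp(1j * t)   # derivative of the parametrization

int_inverse = contour_integral(lambda w: 1 / w, circle, circle_dt, 0.0, 2 * math.pi)
int_square = contour_integral(lambda w: w * w, circle, circle_dt, 0.0, 2 * math.pi)
# Segment from 0 to 1 + i with the non-analytic integrand conj(z): exact value 1.
int_conj = contour_integral(lambda w: w.conjugate(),
                            lambda t: t * (1 + 1j), lambda t: 1 + 1j, 0.0, 1.0)
print(int_inverse, int_square, int_conj)
```

The first value is close to $2\pi i$ and the second is close to $0$, as the exact computation with the parametrization $z(t) = e^{it}$ shows.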

Problems:

Please do problems 14-18 from [BN, Chapter 3] and 2, 3, 6 from [BN, Chapter 4].

First we recalled a basic limit theorem for integrals (essentially the same as we have seen for real functions), [BN, Theorem 4.11], which says that if the functions $f_n$ converge uniformly to the function $f$ on the curve $C$ then $$ \oint_C f_n(z) dz \to \oint_C f(z) dz. $$ We will use this several times from now on.

We then proved [BN, Theorem 4.12], which says that if a function $f$ is the (complex) derivative of another function $F$ (at least on the curve of integration, i.e. $F'(z) = f(z)$ for all $z \in C$) and the curve $C$ is closed then $\oint_C f(z)\,dz = 0$. This is very easy and is essentially just the fundamental theorem of calculus ("the integral of the derivative is the difference of the function values at the endpoints"), since the endpoints of a closed curve coincide.

Our goal then was to prove, for the special case of curves which are rectangles, the so-called Closed Curve Theorem [BN, Theorem 4.14], that if $f$ is an entire function and $R$ is the boundary of a rectangle, then $$ \oint_R f(z)\,dz = 0. $$ This we accomplished and stopped just before proving [BN, Theorem 4.15].

Problems:

Please do problems 5, 7 from [BN, Chapter 4].

We proved [BN, Theorem 4.15] that if a function $f(z)$ is entire then there exists another entire function $F(z)$ such that $\frac{dF}{dz}(z) = f(z)$ for all $z \in \CC$. This implies [BN, Theorem 4.16] that $\int_C f(z)\,dz = 0$ for any closed curve $C$ if $f$ is entire. In fact it is not necessary for $f$ to be analytic everywhere: it is enough that it is analytic in the "inside" of the curve $C$ and on $C$ itself. What the inside is may not be obvious if the curve is self-intersecting, but even in these cases we can usually cut up the curve $C$ into a finite number of simple (i.e., not self-intersecting) closed curves $C_j$ and then it becomes clear what the inside is.

We then proved (and this is not in [BN] at this location) that if $C_1$ is a simple closed curve inside $C_2$ (another simple closed curve) and $f(z)$ is analytic in the region inside $C_2$ and outside $C_1$ then $$ \oint_{C_1} f(z)\,dz = \oint_{C_2} f(z)\,dz. $$

Using this last result we saw that if a function is analytic everywhere except at a point $a \in \CC$ where it is assumed to be continuous then the closed curve theorem holds for that function as well. From this we deduced the very important Cauchy Integral Theorem, which says that if a function $f$ is analytic in and on $C$, a simple closed curve, then for any point $z$ inside $C$ we have $$ f(z) = \frac{1}{2\pi i}\oint_C \frac{f(w) \,dw}{w-z}. $$ This is [BN, Theorem 5.3], except that in your book the result is stated only for a circle $C$.

Using Cauchy's Integral Theorem we proved that if a function is analytic in and on the circle $\Set{\Abs{z-a}=r}$ then inside that circle we have the Taylor series expansion (power series) $$ f(z) = \sum_{n=0}^\infty C_n (z-a)^n, $$ therefore, by the properties of power series that we have seen, $f$ is differentiable infinitely many times and $C_n = \frac{f^{(n)}(a)}{n!}$. This implies that if $f$ is analytic at a point $z$ (this means that it is differentiable in a neighborhood of $z$) then it has derivatives of arbitrary order in a neighborhood of $z$.

In the proof of this last result we deduced the formula $$ f^{(n)}(z) = \frac{n!}{2\pi i} \oint_C \frac{f(w)\,dw}{(w-z)^{n+1}} $$ where $C$ is any simple closed curve around $z$, $f$ is analytic in and on $C$ and $n \ge 0$.
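
The integral formula for derivatives, in its standard form $f^{(n)}(z) = \frac{n!}{2\pi i}\oint_C \frac{f(w)\,dw}{(w-z)^{n+1}}$, can be tested numerically by taking $C$ to be a circle centered at $z$ and replacing the integral by a Riemann sum. The sketch below makes those assumptions. The function name `cauchy_derivative`, the radius and the number of sample points are illustrative choices.

```python
import cmath
import math

def cauchy_derivative(f, z, n, radius=1.0, steps=2000):
    """Approximate f^(n)(z) by n!/(2 pi i) times the integral of f(w)/(w-z)^(n+1) over |w-z| = radius."""
    total = 0j
    for k in range(steps):
        t = 2 * math.pi * k / steps
        offset = radius * cmath.exp(1j * t)        # w - z on the circle
        dw = 1j * offset * (2 * math.pi / steps)   # w'(t) dt
        total += f(z + offset) * dw / offset ** (n + 1)
    return math.factorial(n) * total / (2j * math.pi)

z0 = 0.5 + 0.5j
# Every derivative of exp is exp itself, so all three values should be close to exp(z0).
approximations = [cauchy_derivative(cmath.exp, z0, n) for n in (0, 1, 3)]
print(approximations, cmath.exp(z0))
```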

Problems:

Please do problems 1, 2, 3, 6 from [BN, Chapter 5].

After recalling the important facts that we learned last time we computed some example contour integrals using Cauchy's integral theorem such as the integrals $$ \oint_C \frac{e^z}{z-1}dz,\ \oint_C \frac{e^z}{(z-1)(z+1)}dz,\ \oint_C \frac{e^z}{(z-1)^2}dz,\ \oint_C \frac{e^z}{(z-1)^2(z+1)}dz, $$ where $C$ is a simple closed curve that contains $\pm 1$ in its interior (for instance $C$ could be the circle $\Abs{z}=4$). The fact that we have the function $e^z$ in the numerator is not important. We could have any analytic function. We learned the trick of replacing the curve $C$ by two (or more) curves that contain the singularities in their interior but are small enough to contain one singularity each. We also saw that sometimes we can compute an integral using the so-called expansion into partial fractions.
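
The trick of replacing $C$ by small curves around each singularity can be checked numerically on the second integral above. Partial fractions give $\frac{1}{(z-1)(z+1)} = \frac{1}{2}\left(\frac{1}{z-1} - \frac{1}{z+1}\right)$, so the integral over $\Abs{z}=4$ should equal $\pi i (e - e^{-1})$. The Python sketch below compares this value with a Riemann sum over the big circle and over two small circles. The function name `circle_integral` and the radii of the small circles are assumptions made for this illustration.

```python
import cmath
import math

def circle_integral(f, center, radius, steps=4000):
    """Riemann-sum approximation of the integral of f over |z - center| = radius (counterclockwise)."""
    total = 0j
    for k in range(steps):
        offset = radius * cmath.exp(2j * math.pi * k / steps)
        total += f(center + offset) * 1j * offset   # f(z(t)) z'(t)
    return total * (2 * math.pi / steps)

def g(z):
    return cmath.exp(z) / ((z - 1) * (z + 1))

big = circle_integral(g, 0, 4)                                    # C is the circle |z| = 4
small_sum = circle_integral(g, 1, 0.5) + circle_integral(g, -1, 0.5)
exact = 1j * math.pi * (math.e - 1 / math.e)                      # from partial fractions
print(big, small_sum, exact)
```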

Then we proved that if $C$ is a simple closed curve and $\phi:C \to \CC$ is any bounded function then the function defined by $$ f(z) = \oint_C \frac{\phi(w)\,dw}{w-z} $$ is analytic both inside $C$ and outside $C$ (no claim is made about the function on $C$). This allowed us to remove bounded singularities from a function: if $f$ is analytic in a neighborhood of a point $z$ (but not necessarily at $z$ itself) and is bounded in a neighborhood of $z$ then we can redefine it at $z$ (by the formula $f(z) = \frac{1}{2\pi i}\oint_C \frac{f(w)\,dw}{w-z}$, for $C$ a small enough circle containing $z$ in its interior) so that it is analytic at $z$ as well. Such a singularity (a place, that is, where $f$ is not analytic) is then justly called a removable singularity.

Next we proved that if $f$ is analytic at $z_0$ and vanishes at $z_0$ $$ f(z_0)=0 $$ then there exists an analytic function $g(z)$ such that $$ f(z) = (z-z_0)g(z), $$ a property that we knew, so far, for the class of polynomials. If it so happens that $g(z_0)=0$ too then we can repeat this and write $f(z) = (z-z_0)^2 h(z)$, where $h(z)$ is an analytic function. This can continue if $h(z_0)=0$ and in this manner we can write $$ f(z) = (z-z_0)^k g_k(z), $$ for some natural number $k$. The highest such number possible is called the order of the zero $z_0$ of function $f(z)$. The fact that there is indeed a highest such number $k$ and the process cannot continue indefinitely, unless of course $f(z) \equiv 0$ identically, is due to the following fact: if $f(z) = (z-z_0)^k g_k(z)$ then we can easily prove that $$ 0 = f(z_0) = f'(z_0) = f^{(2)}(z_0) = \cdots = f^{(k-1)}(z_0), $$ so if the process continued indefinitely then all derivatives of $f$ at $z_0$ would be $0$. But these derivatives are, up to the factors $1/n!$, the coefficients of the Taylor series of $f$ centered at $z_0$, therefore $f$ is identically zero, at least in a circular neighborhood of $z_0$.

During the first hour we covered [BN, § 5.2] proving first Liouville's Theorem, then using it to prove the Fundamental Theorem of Algebra, that every polynomial has a root in $\CC$, and, as a consequence of that, every polynomial of degree $n$ has precisely $n$ roots in $\CC$ (not all of them need be different) and, so, such a polynomial $$ f(z) = a_0 + a_1 z + a_2 z^2 + \cdots + a_n z^n $$ can always be written in the form $$ f(z) = a_n (z-\rho_1) (z-\rho_2)\cdots(z-\rho_n), $$ for some numbers (the roots) $\rho_1, \rho_2, \ldots, \rho_n \in \CC$. We then proved the generalized Liouville theorem [BN, Theorem 5.11] which says that if $f$ is entire and satisfies an inequality of the form $$ \Abs{f(z)} \le A + B \Abs{z}^k, $$ for some nonnegative integer $k$ and two constants $A, B \ge 0$ then $f$ is necessarily a polynomial of degree $\le k$. This we proved using the basic Liouville theorem (every bounded entire function is a constant) which is just this theorem for $k=0$, and induction on $k$.

During the second hour we solved some problems (mostly from the list I distributed on Monday) and answered several questions.

Today we proved the so-called Cauchy estimates which bound the derivatives of an analytic function at a point via the size of the function on a circle around that point. More specifically if the function $f$ is analytic in and on the circle $C$, centered at $a \in \CC$ and of radius $R$, and if the function $f$ satisfies $$ \Abs{f(z)} \le M,\ \ \ \text{for $\Abs{z-a}=R$}, $$ then we have for all $n=0, 1, 2, \ldots$ $$ \Abs{f^{(n)}(a)} \le \frac{n! M}{R^n}. $$

Next we proved that all zeros of analytic functions are isolated: if $f$ is analytic at a point $a$ and $f(a)=0$ then there is $r>0$ such that the only zero of $f$ in $\Set{z:\ \Abs{z-a} \lt r}$ is $a$, unless $f$ is identically 0. In other words, if $f$ is not identically 0 then one cannot have a sequence of different numbers $z_n \in \CC$ converging to a point $z \in \CC$ where $f$ is defined and analytic and such that $f(z_n)=0$ for all $n$. As a corollary of this, if we have an analytic function $f$ which vanishes on a curve in the complex plane then it vanishes everywhere. Put differently, if $f$ and $g$ are both analytic on a curve $C$ and equal on $C$ then $f=g$ everywhere. This phenomenon of analytic continuation is valuable and can prove several identities by extending them from the real case. For instance, consider the entire function $\sin^2z + \cos^2z - 1$ which happens to be 0 on $\RR$ as we know since high school. Analytic continuation then implies that the identity $$ \sin^2z+\cos^2z=1 $$ holds for all $z \in \CC$.

During the second hour we solved some problems (mostly from the list I distributed on Monday) and answered several questions.

Today we solved the remaining problems for the list distributed a week ago.

Today we had our midterm exam. The questions can be found here in Greek and in English.

We first went over the midterm exam problems. Then we proved the maximum principle for analytic functions: if $f$ is analytic at a point $z$ and $\Abs{f(z)}$ has a local maximum at $z$ then $f$ is constant in a neighborhood of $z$. The corresponding minimum principle is this: if $f(z) \neq 0$ and $\Abs{f(z)}$ has a local minimum at $z$ then $f$ is constant in a neighborhood of $z$. If $f$ is defined and analytic in a connected open set $D$ then, under the same assumptions, $f$ is constant in $D$.

We saw a quick application of this to the problem: maximize $\Abs{z^2+1}$ in the set $\Abs{z} \le 1$. The maximum principle allows us to reduce this optimization to the boundary points $\Abs{z}=1$ where it is easily solvable after parametrizing the circle.
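
On the circle $z = e^{it}$ we have $\Abs{z^2+1} = \Abs{e^{it}}\cdot\Abs{e^{it}+e^{-it}} = 2\Abs{\cos t}$, so the maximum is $2$, attained at $z = \pm 1$. The short Python sketch below samples the boundary and some interior circles to illustrate this. The grid sizes are arbitrary and the sampling is only an illustration, not a proof.

```python
import cmath
import math

def g(z):
    """The quantity to be maximized, |z^2 + 1|."""
    return abs(z * z + 1)

n = 3600
# Sample the boundary |z| = 1.
boundary_max = max(g(cmath.exp(2j * math.pi * k / n)) for k in range(n))
# Sample the interior circles |z| = 0, 0.1, ..., 0.9.
interior_max = max(g(0.1 * r * cmath.exp(2j * math.pi * k / n))
                   for r in range(10) for k in range(n))
print(boundary_max, interior_max)
```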

Problems:

Please do problems 4, 6, 8, 9 from [BN, Chapter 6].

During the first hour we talked about connectivity, restricting ourselves to open subsets of $\CC$. We defined a connected set to be a set any two points of which can be connected by a polygonal line which is contained entirely in the set. For any open set $D \subseteq \CC$ and point $x \in D$ we defined its connected component to be all points $y$ which can be joined to $x$ via a polygonal line contained in $D$. The set $D$ is connected if and only if it has only one connected component. We proved that connected components (in open sets) are always open and concluded with a proof of the equivalence of a set $D$ being connected to not being able to partition $D$ into two nonempty open sets. In other words, $D$ is NOT connected if and only if we can write $D = A \cup B$ with $A$ and $B$ being nonempty open sets and $A \cap B = \emptyset$.

Then we returned to the Uniqueness Theorem [BN, Theorem 6.9] and redid its proof properly. We had only proved that an analytic function which has a convergent sequence of zeros is 0 in a neighborhood of the limit point and we now extended the result to the function being 0 on the entire connected component of that limit point. If the set where the function is defined is connected then the function is 0 everywhere on that set.

During the second hour (and part of the first) we proved the Open Mapping Theorem [BN, Theorem 7.1] and Schwarz's Lemma [BN, Lemma 7.2]. We also introduced bilinear maps, i.e. functions of the type $$ \phi(z) = \frac{az+b}{cz+d}, $$ where $a, b, c, d \in \CC$ are constants. In particular we dealt with bilinear functions of the form $$ B_\alpha(z) = \frac{z-\alpha}{1-\overline{\alpha}z},\ \ \ \text{for $\Abs{\alpha}<1$}. $$ These functions are defined for $z \neq 1/\overline{\alpha}$, hence they are defined on the unit disk and for $\Abs{z}=1$ we proved that $\Abs{B_\alpha(z)} = 1$. By the maximum principle this implies that $B_\alpha$ maps the unit disk into the unit disk. We used such functions to do Example 1 of [BN].
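
The two properties of $B_\alpha$ used above, modulus $1$ on the unit circle and modulus less than $1$ inside, are easy to observe numerically. In the following Python sketch the function name `blaschke`, the parameter $\alpha = 0.3 + 0.4i$ and the sample grids are illustrative assumptions.

```python
import cmath
import math

def blaschke(z, alpha):
    """B_alpha(z) = (z - alpha) / (1 - conj(alpha) z), for |alpha| < 1."""
    return (z - alpha) / (1 - alpha.conjugate() * z)

alpha = 0.3 + 0.4j   # sample parameter with |alpha| = 0.5
on_circle = [abs(blaschke(cmath.exp(2j * math.pi * k / 360), alpha))
             for k in range(360)]
inside = [abs(blaschke(0.1 * r * cmath.exp(2j * math.pi * k / 36), alpha))
          for r in range(10) for k in range(36)]
print(max(on_circle), min(on_circle), max(inside))
```

Note also that $B_\alpha(\alpha) = 0$, so $B_\alpha$ moves the point $\alpha$ to the center of the disk.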

Problems:

Please do problems 1, 5, 6, 7, 8, 9 from [BN, Chapter 7].

Today we learned a few more things about Möbius transformations, i.e. functions of the type $$ B_a(z) = \frac{a-z}{1-\overline{a} z},\ \ \text{ where $\Abs{a}<1$.} $$ These functions are automorphisms of the open unit disk, i.e. they are analytic, one to one and onto. We also proved that any automorphism of the open unit disk is of essentially the same form, i.e. of the form $$ e^{i\theta} B_a(z), $$ for some real $\theta$ and some $a$ with $\Abs{a}<1$. Functions of this form form a group (we showed this).

We showed how to use Möbius transformations to extend Schwarz's Lemma to functions that map $a \to 0$. We also started doing problem 5 of [BN, Chapter 7] but we did not finish this.

We finished problem 5 from [BN, Chapter 7], which is a good problem that combines much of what we have learned recently.

Next we proved [BN, Theorem 7.3], which is a Liouville-type theorem. We then proved Morera's theorem [BN, Theorem 7.4], which is, in a sense, a converse of Cauchy's theorem and says that if a continuous function $f$, defined on an open set, has zero integral over any closed curve (it is enough to check on rectangles) then it is analytic. We then used this result to prove that analyticity is preserved under uniform limits [BN, Theorem 7.6].

Problems:

Please do problems 10, 11, 13 from [BN, Chapter 7].

We first proved [BN, Theorem 7.7] which led us to the Schwarz Reflection Principle.

One way to state this is to say that if a function $f$ is real on a straight line $L$ and analytic on one side of $L$ and continuous on $L$ then the function $f$ can be defined on the other side of $L$ as well, in such a way that it will be analytic on both sides and on $L$.

The way this extension is done is as follows: to define $f$ on point $z$, which is on the "other" side of $L$ we give it the value $\overline{f(R_L(z))}$, where $R_L(z)$ denotes the point symmetric to $z$ with respect to line $L$, in other words the reflection of $z$ with respect to $L$ (hence the name reflection principle).

This can be generalized to the case when the values of $f$ on $L$ are not necessarily real but they do belong to another straight line $M$. The reflection principle now becomes $$ f(z) = R_M(f(R_L(z))). $$

Suppose $C$ is a circle of center $a$ and radius $R$ and $z\neq a$ is a point inside the circle. We can define the reflection of $z$ with respect to $C$ to be a point $R_C(z)$ on the half line from $a$ to $z$ at distance $\frac{R^2}{\Abs{z-a}}$ from $a$. This point is called the geometric inverse of $z$ with respect to $C$ and we shall call it also the reflection of $z$ with respect to $C$. This is the right sort of symmetry to extend the Schwarz reflection principle to circles. There is a subtle point though as the center of the circle gets mapped to "the point at $\infty$" by the function $R_C$, so this generalization is best done when we have introduced meromorphic functions.

But we can still describe the extension here: suppose $f$ is an analytic function that maps straight line or circle $A$ to straight line or circle $B$ and is also defined on one side of $A$ as an analytic function (and continuous on $A$). Then $f$ can be extended as an analytic function on the other side of $A$ as well by the formula (here $z$ is a point on the other side) $$ f(z) = R_B(f(R_A(z))). $$ In words, we first reflect $z$ with respect to $A$ to a point on the side of $A$ where $f$ is originally defined, then we compute $f$ at that point and, finally, we reflect that value with respect to $B$.
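
To see the formula $f(z) = R_B(f(R_A(z)))$ at work, here is a small Python sketch in which both $A$ and $B$ are the unit circle. The reflection in a circle of center $a$ and radius $R$ is computed as $a + R^2/\overline{(z-a)}$, which is the point on the half line from $a$ through $z$ at distance $R^2/\Abs{z-a}$ from $a$. The test function is a map of the form $\frac{z-\alpha}{1-\overline{\alpha}z}$, which sends the unit circle to itself. The function names, the value of $\alpha$ and the sample point are assumptions made for this illustration.

```python
def reflect_in_circle(z, center, radius):
    """Geometric inverse of z != center with respect to the circle |w - center| = radius."""
    return center + radius ** 2 / (z - center).conjugate()

def blaschke(z, alpha=0.3 + 0.4j):
    """(z - alpha) / (1 - conj(alpha) z), which maps the unit circle to itself."""
    return (z - alpha) / (1 - alpha.conjugate() * z)

z_out = 1.5 - 2.0j                               # a point outside the unit circle
z_in = reflect_in_circle(z_out, 0, 1)            # its reflection, inside the unit circle
extended = reflect_in_circle(blaschke(z_in), 0, 1)
print(extended, blaschke(z_out))                 # the two values should agree
```

Here the function is already defined on both sides of the circle, so the sketch only confirms that the reflection formula reproduces its values outside the disk from its values inside.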

Problems:

Please do problems 14-18 from [BN, Chapter 7].

We first talked about simply connected domains. We did not follow the definition given in the book. Our definition is that an open set $D \subseteq \CC$ is simply connected if every closed path in $D$ can be continuously contracted to a point, with the contraction lying always in $D$. We then stated the theorem of Cauchy for simply connected domains, that if $f$ is analytic in a simply connected domain $D$ and $C$ is a closed curve in $D$ then $\oint_C f(z)\,dz = 0$ (and we do not have to check that $f$ is analytic in the "interior" of $C$, which may be a hard thing to define). This implies that every function $f$ which is analytic in a simply connected domain $D$ has an antiderivative in $D$ (we did not cover this in class, but it is rather easy and the proof in your book [BN, Theorem 8.5] is not hard to read -- we essentially gave the same proof but for the function $1/z$ instead of a general $f$).

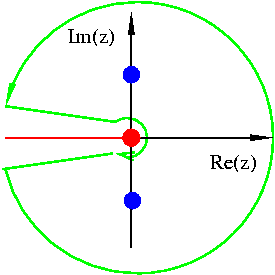

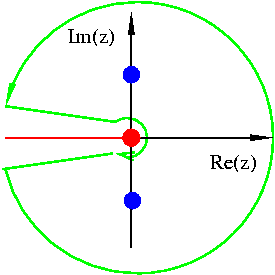

Next we showed that in any simply connected domain not containing $0$ we can define a logarithm, an analytic function $\log z$ which is the inverse of the exponential function, i.e. with $e^{\log z} = z$. This we achieved by taking an antiderivative of $1/z$ in $D$ [BN, Theorem 8.8]. If the goal is to define $\log f(z)$ for an analytic function $f$ in $D$ which does not vanish in $D$ then we can do so by the same process, i.e. taking an antiderivative of the so-called logarithmic derivative of $f$, the function $f'(z)/f(z)$. Having defined a logarithm in a domain allows us to define functions such as $z^{1/2}$ or $z^z$ which are easily expressed using the logarithm and the exponential functions.

We started talking about the classification of isolated singularities of analytic functions ([BN, Chapter 9]) but we shall cover this again on Friday.

Problems:

Please do problems 1, 2, 3, 8, 9 from [BN, Chapter 8].

We talked about the classification of isolated singularities of analytic functions into (a) removable singularities, (b) poles and (c) essential singularities. We proved the Casorati-Weierstrass theorem (in any neighborhood of an essential singularity of a function $f$ the values taken by $f$ are dense in $\CC$). We also saw that if a function $f$ tends to $\infty$ at an isolated singularity $a$, i.e. if $$ \lim_{z \to a} f(z) = \infty, $$ then $a$ is necessarily a pole of $f$, of some finite integer order $k=1, 2, \ldots$, and, therefore, the growth of the function $f$ as we approach $a$ can only be "of an integer order", meaning that for any sufficiently small $r>0$ there are positive constants $c_1, c_2$, such that $$ \frac{c_1}{\Abs{z-a}^k} \le \Abs{f(z)} \le \frac{c_2}{\Abs{z-a}^k},\ \ \ \text{for all $z$ such that $0 \lt \Abs{z-a} \lt r$.} $$

We then introduced Laurent series of the form $$ \sum_{k=-\infty}^\infty a_k (z-a)^k, $$ and proved [BN, Theorem 9.8], that each Laurent series converges in an annular domain of the form $$ r < \Abs{z-a} < R, $$ where $r, R \in [0, +\infty]$ are radii of convergence arising for the positive $k$ and the negative $k$ part of the series.

Problems:

Please do problems 1-5 from [BN, Chapter 9].

We first proved that every function that is analytic in an annulus can be uniquely written as a Laurent series [BN, Theorem 9.9]. We proved then that the principal part (part with negative powers) of the Laurent series around $a \in \CC$ for a function $f$ that has an isolated singularity at $a$ consists of finitely many terms if and only if $f$ has a pole or removable singularity at $a$, otherwise (essential singularity) it has infinitely many terms. Then we did several examples of analytic functions with isolated singularities for which we found the corresponding Laurent series. Last we proved [BN, Theorem 9.13], that every rational function (quotient of two polynomials) can be written as a sum of polynomials of $1/(z-z_i)$, where the $z_i$ are the distinct roots of the denominator polynomial.
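
Laurent coefficients can also be approximated numerically. The sketch below relies on the standard integral formula $a_k = \frac{1}{2\pi i}\oint \frac{f(w)\,dw}{(w-a)^{k+1}}$ over a circle inside the annulus, which is not quoted in the diary above and is brought in here as an assumption, and replaces the integral by an average over equally spaced points. The function name `laurent_coefficient` and the two sample functions are illustrative choices.

```python
import cmath
import math

def laurent_coefficient(f, a, k, radius, steps=2000):
    """Approximate a_k = 1/(2 pi i) * integral of f(w) (w - a)^(-k-1) dw over |w - a| = radius."""
    total = 0j
    for j in range(steps):
        offset = radius * cmath.exp(2j * math.pi * j / steps)   # w - a
        # With dw = i * offset * dt the integrand reduces to f(w) * offset^(-k) * i dt.
        total += f(a + offset) * offset ** (-k)
    return total / steps

def essential(z):
    return cmath.exp(1 / z)      # e^{1/z} = sum over n >= 0 of z^(-n) / n!

def simple_pole(z):
    return 1 / (z * (1 - z))     # 1/z + 1 + z + z^2 + ... for 0 < |z| < 1

ess = {k: laurent_coefficient(essential, 0, k, 1.0) for k in (-3, -2, 0, 1)}
pole = {k: laurent_coefficient(simple_pole, 0, k, 0.5) for k in (-2, -1, 0, 3)}
print(ess)
print(pole)
```

The first function has infinitely many non-zero coefficients with negative index (an essential singularity at $0$), while the second has only the coefficient of $z^{-1}$ (a simple pole at $0$).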

Problems:

Please do problems 6-10, 12 from [BN, Chapter 9].

We defined the residue of a function at a point $a$, with the function being analytic around $a$, as the coefficient of order -1 in the Laurent series of the function around $a$. We saw a few concrete examples of how to calculate the residue. The residue of a function at a point is important as it is the only part of the function responsible for the integral of the function around a point $a$ (this means the contour integral on a small circle around $a$).

We gave the formal definition of the winding number of a curve $\gamma$ around a point $a$ and saw that we can interpret this quantity, originally defined by a contour integral, as "the number of times $\gamma$ winds (turns) around $a$".

We stated and proved the Theorem of Residues of Cauchy [BN, Theorem 10.5]. We then saw how to apply this to compute the number of zeros minus the number of poles of a function enclosed by a simple closed curve [BN, Theorem 10.8]. This is called the Argument Principle, and we saw how to interpret the integral of the logarithmic derivative of $f$ $$ \frac{1}{2\pi i}\oint_{\Gamma} \frac{f'(z)}{f(z)}dz $$ as the winding number of the curve $f(\Gamma)$ around $0$.
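
The integral of the logarithmic derivative can be evaluated numerically to count zeros and poles. The Python sketch below does this for a circle $\Gamma$, passing the derivative $f'$ in by hand. The function name `zeros_minus_poles` and the three sample functions are assumptions made for this illustration.

```python
import cmath
import math

def zeros_minus_poles(f, df, center, radius, steps=4000):
    """Approximate 1/(2 pi i) * integral of f'(z)/f(z) dz over the circle |z - center| = radius."""
    total = 0j
    for k in range(steps):
        offset = radius * cmath.exp(2j * math.pi * k / steps)
        w = center + offset
        total += df(w) / f(w) * 1j * offset      # integrand times z'(t)
    return total * (2 * math.pi / steps) / (2j * math.pi)

# z^3 - 1 has three zeros inside |z| = 2.
count_cubic = zeros_minus_poles(lambda z: z ** 3 - 1, lambda z: 3 * z ** 2, 0, 2)
# sin z has the zeros 0, pi and -pi inside |z| = 4.
count_sine = zeros_minus_poles(cmath.sin, cmath.cos, 0, 4)
# z^2 / (z - 1/2) has a double zero and a simple pole inside |z| = 1, so the count is 2 - 1.
count_rational = zeros_minus_poles(lambda z: z ** 2 / (z - 0.5),
                                   lambda z: (z ** 2 - z) / (z - 0.5) ** 2, 0, 1)
print(count_cubic, count_sine, count_rational)
```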

Problems:

Please do problems 1, 2, 5 from [BN, Chapter 10].

We proved the Theorem of Rouché [BN, Theorem 10.10], which is a great result for helping us count the roots of a function inside a curve by reducing it to counting the roots of a simpler function. We saw several applications of this, e.g. we did [BN, Problem 10.6, (a-c)].

We then completed [BN, Chapter 10] by proving the Theorem of Hurwitz.

Problems:

Please do problems 6, 7, 8 from [BN, Chapter 10].

We started looking at techniques for the computation of improper definite integrals on the real line using Cauchy's residue theorem. The first class of integrals we looked at are integrals of the form $$ \int_{-\infty}^\infty \frac{P(x)}{Q(x)}\,dx, $$ where $P(x), Q(x)$ are polynomials, $Q(x)$ never vanishes on $\RR$ and with $\deg{Q} \ge \deg{P}+2$ (this last condition guarantees that the above integral converges). We proved that the above integral, divided by $2\pi i$ equals the sum of the residues of the function $P/Q$ in the upper half plane $\Set{\Im{z}>0}$. We applied this technique to the evaluation of the integral $\int_{-\infty}^\infty \frac{dx}{1+x^4}$.
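
For the integral $\int_{-\infty}^\infty \frac{dx}{1+x^4}$ the computation can be sketched as follows. The poles of $\frac{1}{1+z^4}$ in the upper half plane are $e^{i\pi/4}$ and $e^{3i\pi/4}$, both simple, and the residue of $1/Q$ at a simple zero $p$ of $Q$ is $1/Q'(p)$. This gives the value $\pi/\sqrt{2}$. The Python code below carries out this arithmetic and compares it with a crude midpoint-rule approximation on $[-100, 100]$. The truncation interval and the number of steps are arbitrary choices, so the two numbers agree only to about six decimal places.

```python
import cmath
import math

# Poles of 1/(1 + z^4) in the upper half plane.
poles = [cmath.exp(1j * math.pi / 4), cmath.exp(3j * math.pi / 4)]
# Residue of 1/Q at a simple zero p of Q is 1/Q'(p); here Q'(z) = 4 z^3.
residue_sum = sum(1 / (4 * p ** 3) for p in poles)
by_residues = 2j * math.pi * residue_sum

def midpoint_rule(f, a, b, steps):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    h = (b - a) / steps
    return h * sum(f(a + (k + 0.5) * h) for k in range(steps))

by_quadrature = midpoint_rule(lambda x: 1 / (1 + x ** 4), -100.0, 100.0, 200000)
print(by_residues, by_quadrature, math.pi / math.sqrt(2))
```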

Next we saw how to deal with integrals of the form $$ \int_{-\infty}^\infty \frac{P(x)}{Q(x)} \sin x \,dx $$ where now, besides the nonvanishing of $Q(x)$ on the real line, we only assume $\deg{Q} \ge \deg{P}+1$. This condition does not guarantee absolute convergence, yet we still proved that this integral is convergent by using integration by parts, a standard method in Analysis to deal with an oscillating factor that is being integrated. We saw the general method for dealing with such integrals but did not have the time to go over an example.

We first did an example for the method shown in [BN, Chapter 11, II.] (the integral $\int_{-\infty}^\infty \frac{x \sin{x} \, dx}{1+x^2}$). Then we did the example $\int_{-\infty}^\infty \frac{\sin{x}}{x}\,dx$ from the book which requires somewhat different treatment. Next, we covered the method of [BN, Chapter 11, III.(A)] and the example $\int_0^\infty \frac{dx}{1+x^3}$.

Problems:

Please do problems 1 (first six), 2, 3 from [BN, Chapter 11].

Today we saw how to compute integrals of the form $$ \int_0^\infty \frac{x^{a-1}}{P(x)}\,dx, $$ where $P(x)$ is a polynomial of degree at least 1 with no roots on $\RR$ and $0 \lt a \lt 1$. Then we also saw how to compute integrals of the form $$ \int_0^{2\pi} R(\cos\theta, \sin\theta)\,d\theta, $$ where $R(\cdot, \cdot)$ is a rational function with no singularities on $[-1, 1]$, by converting it to an integral of the form $$ \oint_{\Set{\Abs{z}=1}} f(z)\,dz, $$ for an appropriate function $f$. Last we also saw how to prove that the Fourier Transform of a Gaussian function is also a Gaussian function by using complex integration. This is not in your book but you can read this in § 7 of this document.
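
As an illustration of the trigonometric case, take the sample integral $\int_0^{2\pi} \frac{d\theta}{2+\cos\theta}$ (chosen here as an example, it is not necessarily one done in class). With $z = e^{i\theta}$ we have $\cos\theta = \frac{1}{2}(z + 1/z)$ and $d\theta = \frac{dz}{iz}$, so the integral becomes $\oint_{\Set{\Abs{z}=1}} \frac{2\,dz}{i(z^2+4z+1)}$. Only the root $-2+\sqrt{3}$ of the denominator lies inside the unit circle, and the residue theorem gives $2\pi/\sqrt{3}$. The Python sketch below repeats this arithmetic and compares it with a direct midpoint-rule evaluation. The variable names and the number of steps are illustrative.

```python
import math

# Roots of z^2 + 4 z + 1: one inside and one outside the unit circle.
z_in = -2 + math.sqrt(3)
z_out = -2 - math.sqrt(3)

# f(z) = 2 / (i (z - z_in)(z - z_out)) has a simple pole at z_in.
residue = 2 / (1j * (z_in - z_out))
by_residues = 2j * math.pi * residue

# Direct midpoint-rule evaluation of the original real integral.
steps = 2000
by_quadrature = (2 * math.pi / steps) * sum(
    1 / (2 + math.cos(2 * math.pi * (k + 0.5) / steps)) for k in range(steps))
print(by_residues, by_quadrature, 2 * math.pi / math.sqrt(3))
```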